For clinicians and researchers confronting ultra-rare or undiagnosed genetic diseases, the standard diagnostic toolkit frequently reaches its limits well before an answer does. Whole exome sequencing returns a variant of uncertain significance. Panels miss non-coding regions. Families cycle through specialists for years without resolution. This guide breaks down a rigorous, stepwise research process built specifically for these cases, covering everything from initial phenotyping and genomic sequencing to functional modeling, therapeutic development, and the iterative reanalysis strategies that are now shifting diagnostic yields in programs serving the most complex patients.

Table of Contents

- Key requirements and tools for genetic disease research

- Step-by-step process: From patient referral to variant prioritization

- Innovative modeling and functional validation: Organisms, organoids, and in silico

- Therapeutic development: Gene therapy, trial design, and regulatory challenges

- Why hybrid approaches and perpetual reanalysis unlock new answers

- Connect with expert resources for rare disease research

- Frequently asked questions

Key Takeaways

| Point | Details |
|---|---|
| Comprehensive phenotyping essential | Systematic collection and description of patient features guides effective variant discovery. |
| Iterative genomic analysis boosts yield | Ongoing reanalysis and AI-powered variant prioritization increase diagnostic success rates. |
| Hybrid modeling accelerates validation | Combining in silico, organoid, and animal models creates robust evidence for therapy development. |
| Gene therapies are expanding | New technologies and regulatory pathways are driving rapid growth in ultra-rare disease therapeutic options. |
| Expert networks unlock progress | International collaboration and database sharing help uncover novel genes and connect patients with potential therapies. |

Key requirements and tools for genetic disease research

With a clear understanding of the challenge, let's look at what you need to get started.

The genetic disease research process for ultra-rare and undiagnosed diseases typically begins with patient referral and comprehensive phenotyping, followed by advanced genomic sequencing. That deceptively simple sentence contains enormous operational complexity. Each phase demands specific tools, data standards, and expertise that must be assembled before meaningful research can proceed.

Core data and clinical requirements:

- Deep phenotyping data: Human Phenotype Ontology (HPO) terms, medical histories, imaging, biochemical profiles, and family pedigrees are essential. Incomplete phenotyping is one of the most common reasons variant interpretation stalls.

- Genomic sequencing platforms: Whole exome sequencing (WES) captures coding regions and is cost-effective for initial screens. Whole genome sequencing (WGS) adds non-coding regions, structural variants, and repeat expansions that WES routinely misses. RNA sequencing (RNA-seq) layered on top identifies aberrant splicing events invisible to DNA-level analysis alone.

- Variant prioritization software: Tools like CADD, SpliceAI, and REVEL score variant pathogenicity computationally. Population-level databases including gnomAD provide allele frequency context. ClinVar and OMIM anchor variant classification to established disease knowledge.

- AI and machine learning platforms: Emerging platforms apply phenotype-driven algorithms to rank candidate variants against known disease signatures, dramatically reducing manual review burden.

- ACMG guidelines: The variant classification framework of the American College of Medical Genetics and Genomics (ACMG), published jointly with the Association for Molecular Pathology (AMP), remains the standard for assessing variant pathogenicity and should be applied at every interpretive step.
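The ACMG/AMP framework combines evidence codes by strength using fixed rules. Below is a minimal Python sketch of the 2015 combining rules as we understand them. It assumes a qualified reviewer has already assigned the evidence codes (PVS1, PS1 to PS4, PM1 to PM6, PP1 to PP5, BA1, BS1 to BS4, BP1 to BP7). It ignores later ClinGen refinements, such as the reduced strength of PM2 and points-based scoring. Any case that meets both pathogenic and benign rules is treated as uncertain significance. It is an illustration, not a clinical classifier.

```python
from collections import Counter

# Map evidence-code prefixes to ACMG/AMP (2015) evidence strengths.
STRENGTH_BY_PREFIX = {
    "PVS": "very_strong",
    "PS": "strong",
    "PM": "moderate",
    "PP": "supporting",
    "BA": "standalone_benign",
    "BS": "strong_benign",
    "BP": "supporting_benign",
}


def strength_of(code: str) -> str:
    # Check the three-letter prefix (PVS) before the two-letter ones.
    for length in (3, 2):
        prefix = code[:length].upper()
        if prefix in STRENGTH_BY_PREFIX:
            return STRENGTH_BY_PREFIX[prefix]
    raise ValueError(f"unrecognized ACMG evidence code: {code!r}")


def classify(codes: list[str]) -> str:
    n = Counter(strength_of(code) for code in codes)
    pvs, ps, pm, pp = n["very_strong"], n["strong"], n["moderate"], n["supporting"]
    ba, bs, bp = n["standalone_benign"], n["strong_benign"], n["supporting_benign"]

    pathogenic = (
        (pvs >= 1 and (ps >= 1 or pm >= 2 or (pm >= 1 and pp >= 1) or pp >= 2))
        or ps >= 2
        or (ps >= 1 and (pm >= 3 or (pm >= 2 and pp >= 2) or (pm >= 1 and pp >= 4)))
    )
    likely_pathogenic = (
        (pvs >= 1 and pm >= 1)
        or (ps >= 1 and (pm >= 1 or pp >= 2))
        or pm >= 3
        or (pm >= 2 and pp >= 2)
        or (pm >= 1 and pp >= 4)
    )
    benign = ba >= 1 or bs >= 2
    likely_benign = (bs >= 1 and bp >= 1) or bp >= 2

    if pathogenic:
        path_call = "Pathogenic"
    elif likely_pathogenic:
        path_call = "Likely pathogenic"
    else:
        path_call = None
    if benign:
        benign_call = "Benign"
    elif likely_benign:
        benign_call = "Likely benign"
    else:
        benign_call = None

    # Contradictory pathogenic and benign evidence defaults to VUS.
    if path_call and benign_call:
        return "Uncertain significance"
    return path_call or benign_call or "Uncertain significance"
```

For example, `classify(["PVS1", "PM2"])` returns "Likely pathogenic" under these rules. The same codes would be weighed differently under the ClinGen adjustments the sketch omits.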

| Requirement | Tool or resource | Purpose |
|---|---|---|
| Phenotype capture | HPO terms, clinical notes | Structured disease description |
| Initial sequencing | WES or WGS | Variant discovery |
| Transcriptomics | RNA-seq | Splicing and expression analysis |
| Variant scoring | CADD, SpliceAI, REVEL | Pathogenicity prediction |
| Population filtering | gnomAD, ClinVar | Allele frequency and classification |
| AI prioritization | Phenotype-driven ML tools | Candidate ranking |
| Functional assays | Cell-based and biochemical | Variant validation |
| Reanalysis triggers | Database update monitoring | Ongoing diagnostic yield |

Functional assays, model organisms, and reanalysis pipelines are not optional extras. They are the infrastructure that converts a candidate variant into a confirmed diagnosis. Reviewing the full set of rare disease treatment steps alongside your genomic toolkit helps align discovery workflows with downstream therapeutic planning from the start.

Pro Tip: Before launching sequencing, audit your phenotype data quality. A poorly coded HPO profile will misdirect every AI prioritization tool downstream, no matter how powerful the algorithm.
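The audit this tip recommends can start with simple structural checks before any clinical review. The minimal sketch below flags three problems:

- malformed or duplicated term IDs
- uninformative root terms (HP:0000001 "All" and HP:0000118 "Phenotypic abnormality")
- profiles with too few informative terms

The threshold of five informative terms is an illustrative assumption. The sketch does not check that IDs exist in the current HPO release or that each term is as specific as possible; both checks require loading the ontology itself.

```python
import re

# HPO term IDs are "HP:" followed by seven digits, e.g. "HP:0001250".
HPO_ID = re.compile(r"HP:[0-9]{7}")

# Root-level terms that carry almost no diagnostic information.
UNINFORMATIVE = {"HP:0000001", "HP:0000118"}  # "All", "Phenotypic abnormality"


def audit_hpo_profile(terms: list[str], min_informative: int = 5) -> list[str]:
    """Return warnings for a patient's HPO term list (empty list = no issues found).

    min_informative is an illustrative threshold, not a published standard.
    """
    warnings = []
    malformed = [t for t in terms if not HPO_ID.fullmatch(t)]
    if malformed:
        warnings.append(f"malformed IDs: {malformed}")
    duplicates = sorted({t for t in terms if terms.count(t) > 1})
    if duplicates:
        warnings.append(f"duplicate IDs: {duplicates}")
    generic = sorted(set(terms) & UNINFORMATIVE)
    if generic:
        warnings.append(f"uninformative root terms: {generic}")
    informative = {t for t in terms if HPO_ID.fullmatch(t)} - UNINFORMATIVE
    if len(informative) < min_informative:
        warnings.append(
            f"only {len(informative)} informative term(s); profile may be too sparse"
        )
    return warnings
```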

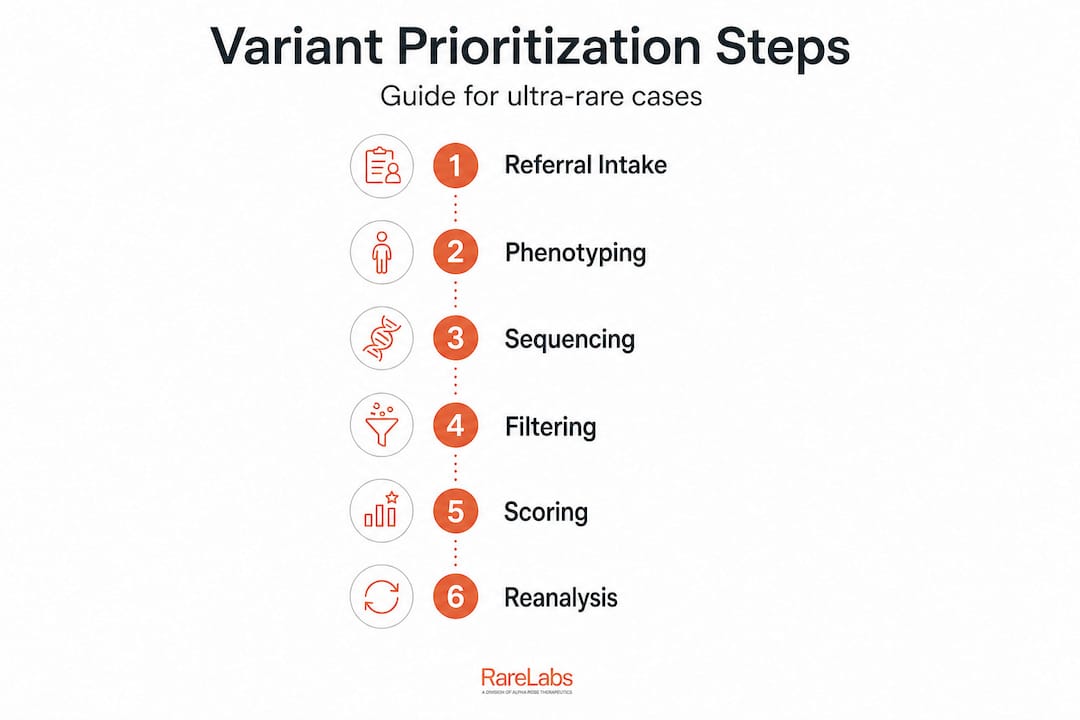

Step-by-step process: From patient referral to variant prioritization

Once resources are assembled, follow this stepwise roadmap.

1. Patient referral and intake. Collect complete clinical records, specialist notes, prior genetic test results, and family history. Establish whether the case is simplex, familial, or consanguineous, as this shapes sequencing strategy immediately.

2. Deep phenotyping. Translate clinical observations into structured HPO terms. Engage specialists across relevant organ systems. Photograph distinctive dysmorphic features. Run metabolomic and proteomic panels where indicated. The precision of this step directly determines the quality of every computational analysis that follows.

3. Genomic sequencing. Choose the sequencing modality based on prior testing history. For cases with prior negative WES, move directly to WGS or trio WGS to capture de novo variants and non-coding regions. Add RNA-seq from accessible tissues when splicing defects are suspected.

4. Variant calling and filtering. Apply quality filters, population frequency cutoffs, and inheritance model constraints. For recessive diseases in non-consanguineous families, candidate compound heterozygous variants must be phased to confirm that they lie in trans (on different parental alleles), either computationally or through parental sequencing.

5. Pathogenicity scoring and ACMG classification. Score candidates with validated in silico tools. Apply ACMG criteria systematically. Classify variants as pathogenic, likely pathogenic, VUS, likely benign, or benign. Document evidence transparently.

6. Iterative reanalysis. This is where many programs fall short. Reanalysis that combines AI/ML tools with ACMG criteria substantially boosts diagnostic yield in programs such as the Undiagnosed Diseases Network (UDN) and TUDP. In some cohorts, reanalysis alone accounts for up to 17% additional diagnoses. Schedule reanalysis every 6 to 12 months as ClinVar, gnomAD, and disease databases expand.
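The filtering and phasing logic in step 4 can be sketched in Python. This is a minimal illustration under several assumptions:

- Genotypes are already called and simplified to "ref", "het", or "hom".
- The frequency and depth thresholds are placeholders, to be tuned per inheritance model and assay.
- Variant IDs and gene names are hypothetical.

The key rule it encodes is that two heterozygous variants in the same gene support a recessive diagnosis only when parental genotypes place them in trans.

```python
from dataclasses import dataclass
from itertools import combinations


@dataclass
class Variant:
    gene: str
    variant_id: str   # hypothetical identifier, e.g. "chr1:12345:A>G"
    gnomad_af: float  # population allele frequency (0.0 if absent from gnomAD)
    depth: int        # read depth at the site in the proband
    proband: str      # "het" or "hom"
    mother: str       # "ref", "het", or "hom"
    father: str       # "ref", "het", or "hom"


def passes_filters(v: Variant, max_af: float = 0.001, min_depth: int = 15) -> bool:
    # Illustrative thresholds only; tune per inheritance model and sequencing assay.
    return v.gnomad_af <= max_af and v.depth >= min_depth


def parental_origin(v: Variant) -> str:
    in_mother, in_father = v.mother != "ref", v.father != "ref"
    if in_mother and not in_father:
        return "maternal"
    if in_father and not in_mother:
        return "paternal"
    if not in_mother and not in_father:
        return "de_novo"  # or a parental false negative; confirm before relying on it
    return "both_parents"


def recessive_candidates(variants: list[Variant], **filter_kwargs) -> list[tuple]:
    kept = [v for v in variants if passes_filters(v, **filter_kwargs)]
    results = []
    # Homozygous in the proband and carried by both parents.
    for v in kept:
        if v.proband == "hom" and v.mother == "het" and v.father == "het":
            results.append((v.gene, (v.variant_id,), "homozygous"))
    # Heterozygous pairs in the same gene count only if they are in trans.
    hets = [v for v in kept if v.proband == "het"]
    for a, b in combinations(hets, 2):
        if a.gene != b.gene:
            continue
        origins = {parental_origin(a), parental_origin(b)}
        if origins == {"maternal", "paternal"}:
            status = "compound_het_in_trans"
        elif origins & {"de_novo", "both_parents"}:
            status = "phase_unknown"  # needs read-backed or long-read phasing
        else:
            status = "in_cis"  # same parental allele; reported so reviewers see why it fails
        results.append((a.gene, (a.variant_id, b.variant_id), status))
    return results
```

Pairs marked `phase_unknown` are exactly the cases where read-backed or long-read phasing earns its cost.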

The following table contrasts traditional versus innovative methods at each major step.

| Research step | Traditional approach | Innovative approach |
|---|---|---|
| Sequencing | Gene panels, WES | WGS + RNA-seq, long-read sequencing |
| Variant prioritization | Manual expert review | AI/ML phenotype-driven ranking |
| Functional testing | Single-gene assays | iPSC-based parallel functional screens |
| Reanalysis | Ad hoc, case-by-case | Automated, database-triggered |
| Non-coding detection | Limited | WGS + RNA-seq + in silico modeling |
| Therapeutic translation | Academic collaboration | Parallel drug and ASO screening |

Edge cases deserve special attention. Non-coding variants affecting regulatory elements or deep intronic splicing are systematically missed by WES, and even by many WGS pipelines that lack RNA-seq confirmation. Variants of uncertain significance (VUS) that look unremarkable today may be reclassified as likely pathogenic or pathogenic as functional data accumulates. Building reanalysis checkpoints into your diagnostic steps for rare diseases is not administrative overhead. It is a core scientific strategy.

Pro Tip: Set up automated alerts tied to ClinVar and OMIM update feeds for every VUS your program has returned. Reclassification events are clinically actionable and time-sensitive for families awaiting answers.
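The alerting and scheduling logic from step 6 and this tip can be sketched as two parts: a comparison of classification snapshots and a simple trigger rule. The sketch assumes you already store a per-variant classification from each reanalysis run, for example from your own pipeline's ClinVar-annotated output. It does not call any ClinVar or OMIM API. The `new_release` flag stands in for however you monitor database releases. The 365-day default reflects at-least-annual reanalysis; use 182 for a six-month cadence.

```python
from datetime import date

ACTIONABLE = {"Likely pathogenic", "Pathogenic"}


def reclassified(previous: dict[str, str], current: dict[str, str]) -> dict[str, tuple[str, str]]:
    """Return {variant_id: (old, new)} for variants whose classification changed."""
    return {
        vid: (old, current[vid])
        for vid, old in previous.items()
        if vid in current and current[vid] != old
    }


def actionable_upgrades(changes: dict[str, tuple[str, str]]) -> list[str]:
    """VUS that moved to likely pathogenic or pathogenic: these warrant recontact."""
    return sorted(
        vid for vid, (old, new) in changes.items()
        if old == "Uncertain significance" and new in ACTIONABLE
    )


def reanalysis_due(last_run: date, today: date, new_release: bool,
                   max_interval_days: int = 365) -> bool:
    """Due when a relevant database release has appeared since the last run,
    or when the maximum interval (365 days by default) has elapsed."""
    return new_release or (today - last_run).days >= max_interval_days
```

Only VUS upgrades are surfaced here. Downgrades of variants previously reported as pathogenic deserve the same prompt review.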

Innovative modeling and functional validation: Organisms, organoids, and in silico

After identifying likely variants, move to model validation and testing innovations.

Variant prioritization narrows the candidate list. Functional validation decides it. Modern rare disease research now draws on three complementary modeling paradigms, each with distinct advantages and real constraints.

Patient-derived iPSCs and organoids. Patient-derived induced pluripotent stem cells (iPSCs) can be differentiated into organoids that recapitulate disease phenotypes in a fully human cellular context. These models enable genotype-phenotype correlation, CRISPR correction, and therapy testing. iPSC-derived neurons, cardiomyocytes, hepatocytes, and cerebral organoids can model tissues that cannot be sampled from living patients. The major advantage is human specificity. The major constraint is time: generating and validating iPSC lines takes months and requires significant technical expertise.

Animal models. Improved mouse models of alternating hemiplegia of childhood (AHC) have enabled preclinical testing of gene-editing therapies for this nervous system disorder. More broadly, well-designed animal models remain indispensable for the pharmacokinetic, biodistribution, and toxicology data that regulators require. However, the biological distance between mice and humans means that phenotypes sometimes fail to translate, particularly for complex neurological or metabolic presentations. This is not a reason to abandon animal models. It is a reason to pair them with human-derived systems.

In silico and digital twin approaches. Computational modeling, including protein structure prediction with tools like AlphaFold, molecular dynamics simulations, and digital twin frameworks, can rapidly estimate the functional consequences of variants before any wet lab work begins. These approaches are fast and scalable, but their outputs remain predictions. They work best as a prioritization layer rather than a standalone validation strategy.
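One cheap in silico check is whether a missense variant falls in a confidently predicted region of the structure. AlphaFold Protein Structure Database model files store the per-residue confidence score (pLDDT) in the B-factor column, so plain PDB column parsing can read it. This minimal sketch assumes a PDB-format model with chain A; for mmCIF files, a proper parser (for example Biopython) is the better tool. The band boundaries follow the ones AlphaFold DB uses for display. A low-confidence region weakens structure-based reasoning about a variant but, on its own, says nothing about pathogenicity.

```python
def plddt_by_residue(pdb_text: str, chain: str = "A") -> dict[int, float]:
    """Map residue number -> pLDDT, read from the B-factor column of CA atoms.

    Uses fixed PDB columns (1-based): atom name 13-16, chain 22,
    residue number 23-26, B-factor 61-66; the slices below are 0-based.
    """
    scores = {}
    for line in pdb_text.splitlines():
        if not line.startswith("ATOM"):
            continue
        if line[12:16].strip() != "CA" or line[21] != chain:
            continue
        scores[int(line[22:26])] = float(line[60:66])
    return scores


def confidence_band(plddt: float) -> str:
    if plddt > 90:
        return "very high"
    if plddt > 70:
        return "confident"
    if plddt > 50:
        return "low"
    return "very low"
```

Typical use: look up the variant's residue number in `plddt_by_residue(model_text)` and pass the score to `confidence_band`. Use the result as one prioritization signal among several.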

"The most productive research programs we observe do not choose between iPSCs, animal models, and in silico tools. They sequence them strategically, using computational analysis to prioritize, organoids to confirm human relevance, and animal models to satisfy regulatory requirements for preclinical safety."

Functional validation workflow:

- Confirm variant expression and protein consequences through immunoblot, immunofluorescence, and transcriptomic analysis

- Use CRISPR isogenic correction in iPSC lines to establish causal relationships between variant and phenotype

- Run parallel treatment screens using FDA-approved compound libraries against patient-derived cellular models

- Apply antisense oligonucleotide (ASO) tiling across splice sites when splicing defects are confirmed

- Validate hits in secondary models before advancing therapeutic candidates
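ASO tiling means scanning overlapping antisense windows across the region around a splice site or cryptic splice site, so that each window can be tested for splice correction. This is a minimal sketch. It assumes an 18-nucleotide default ASO length and a target supplied as sense-strand DNA. Real ASO design also weighs backbone and sugar chemistry, off-target complementarity, SNPs under the binding site, and RNA secondary structure, none of which is modeled here.

```python
from typing import Optional

COMPLEMENT = str.maketrans("ACGT", "TGCA")


def reverse_complement(seq: str) -> str:
    return seq.translate(COMPLEMENT)[::-1]


def tile_asos(target: str, aso_length: int = 18, step: int = 1,
              cover: Optional[int] = None) -> list[dict]:
    """Tile candidate ASOs across a sense-strand target sequence (DNA alphabet).

    Each ASO is the reverse complement of its target window, written 5'->3'.
    If `cover` is given (0-based index into `target`, e.g. a splice site),
    only windows overlapping that position are kept.
    """
    target = target.upper()
    if set(target) - set("ACGT"):
        raise ValueError("target must contain only A, C, G, T")
    tiles = []
    for start in range(0, len(target) - aso_length + 1, step):
        end = start + aso_length
        if cover is not None and not (start <= cover < end):
            continue
        window = target[start:end]
        gc_fraction = (window.count("G") + window.count("C")) / aso_length
        tiles.append({
            "start": start,
            "end": end,
            "aso": reverse_complement(window),
            "gc": round(gc_fraction, 2),
        })
    return tiles
```

A step of 1 gives the densest tiling. Larger steps trade resolution for fewer oligos to synthesize and screen.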

Exploring the principles behind genetic disease modeling and how disease modeling unlocks rare disease treatments provides additional context for integrating these platforms into a coherent discovery pipeline.

Therapeutic development: Gene therapy, trial design, and regulatory challenges

Validated models inform the development and testing of new therapies.

The translation from a functionally validated variant to a patient-accessible therapy is the hardest part of the entire process. For ultra-rare diseases, it is also the most urgent. The therapeutic development landscape now includes over 2,100 gene therapies in the global pipeline, with rare diseases representing the top non-oncology therapeutic target category. This is an extraordinary shift from a decade ago, when gene therapy was largely experimental.

Therapy modalities relevant to ultra-rare diseases:

- AAV-based gene replacement: Effective for loss-of-function variants when the coding sequence, together with its promoter and regulatory elements, fits within the AAV packaging limit of roughly 4.7 kb. CNS delivery remains a significant obstacle because of the blood-brain barrier and immune responses.

- CRISPR gene editing: Increasingly practical for both ex vivo (correcting cells outside the body) and in vivo applications. Base editing and prime editing avoid the double-strand breaks made by standard CRISPR-Cas9 nuclease editing, reducing the risk of unintended insertions, deletions, and rearrangements.

- Antisense oligonucleotides (ASOs): Rapidly customizable for splice-switching or transcript knockdown. Custom ASOs designed from patient-specific splicing defects can move from design to patient dosing faster than any other modality in appropriate cases.

- Small molecule therapies: FDA-approved drug repurposing screens against patient iPSC models can identify unexpected therapeutic activity without the long development timelines of novel compounds.
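Repurposing screens like these usually score each compound against two references measured on the same plate. One is the patient line with vehicle (the disease baseline); the other is an isogenic corrected line (the normal target). The sketch below assumes one scalar readout per well, where higher means closer to normal. It checks plate quality with the standard Z'-factor and then calls hits by percent rescue. The 0.5 Z' and 50% rescue cutoffs are common illustrative choices, not requirements. A real screen would add replicates, plate normalization, and dose-response follow-up.

```python
from statistics import mean, stdev


def z_prime(positive: list[float], negative: list[float]) -> float:
    """Z'-factor: 1 - 3 * (sd_pos + sd_neg) / |mean_pos - mean_neg|."""
    return 1 - 3 * (stdev(positive) + stdev(negative)) / abs(mean(positive) - mean(negative))


def percent_rescue(value: float, patient_vehicle: list[float],
                   corrected: list[float]) -> float:
    """0% = patient baseline with vehicle, 100% = isogenic corrected line."""
    baseline, target = mean(patient_vehicle), mean(corrected)
    return 100 * (value - baseline) / (target - baseline)


def call_hits(readouts: dict[str, float], patient_vehicle: list[float],
              corrected: list[float], min_rescue: float = 50.0,
              min_z_prime: float = 0.5) -> list[str]:
    """Return compound names ranked by percent rescue, after a plate QC check."""
    zp = z_prime(corrected, patient_vehicle)
    if zp < min_z_prime:
        raise ValueError(f"plate failed QC: Z' = {zp:.2f}")
    rescue = {name: percent_rescue(v, patient_vehicle, corrected)
              for name, v in readouts.items()}
    hits = [name for name, r in rescue.items() if r >= min_rescue]
    return sorted(hits, key=lambda name: rescue[name], reverse=True)
```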

Regulatory considerations:

The FDA's frameworks for n-of-1 trials that use natural history data as the control arm have opened a genuine pathway for single-patient or micro-cohort studies in ultra-rare diseases. Key regulatory nuances include:

- choosing the right endpoint (biomarker-based versus clinical)
- "Goldilocks" cohort design, in which the patient population is large enough to generate meaningful data but not so heterogeneous that effect sizes are diluted
- using expanded access or compassionate use mechanisms to reach patients while formal trials are underway
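Natural history comparison in an n-of-1 setting often comes down to one question: does the treated patient's trajectory fall outside what untreated natural history predicts? This minimal sketch rests on stated assumptions: a single clinical score where higher is better, visit times in years, and a set of per-patient natural history slopes you already have. A real submission needs prespecified endpoints and statistical methods agreed with regulators; this only shows the arithmetic.

```python
def ols_slope(times: list[float], values: list[float]) -> float:
    """Ordinary least-squares slope of `values` against `times` (change per unit time)."""
    n = len(times)
    if n < 2 or n != len(values):
        raise ValueError("need at least two visits with matching times and values")
    t_bar, v_bar = sum(times) / n, sum(values) / n
    sxx = sum((t - t_bar) ** 2 for t in times)
    if sxx == 0:
        raise ValueError("visits must occur at different times")
    sxy = sum((t - t_bar) * (v - v_bar) for t, v in zip(times, values))
    return sxy / sxx


def natural_history_fraction(patient_slope: float, cohort_slopes: list[float],
                             higher_is_better: bool = True) -> float:
    """Fraction of untreated natural history patients whose trajectory was at
    least as favorable as the treated patient's. Small values suggest the
    patient's course is unusual relative to natural history."""
    if not cohort_slopes:
        raise ValueError("natural history cohort is empty")
    if higher_is_better:
        as_good = sum(s >= patient_slope for s in cohort_slopes)
    else:
        as_good = sum(s <= patient_slope for s in cohort_slopes)
    return as_good / len(cohort_slopes)
```

With a small natural history cohort, this fraction can only take a few coarse values. That is one more reason to begin prospective collection early.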

Major challenges:

- Manufacturing scale-up for patient-specific therapies

- Absence of validated natural history datasets for truly novel diseases

- Limited payer coverage evidence for n-of-1 treatments

- CNS delivery barriers for neurological rare diseases

- Long-term safety monitoring with tiny patient populations

Reviewing the range of genetic therapy options, understanding available gene therapy approaches, and applying a structured framework for evaluating gene therapy candidates will help your team navigate this complex landscape efficiently.

Pro Tip: Natural history data collection should begin the moment a patient enters your research program, not when a therapy candidate is identified. Regulatory reviewers will ask for it, and retrospective collection is always inferior to prospective documentation.

Why hybrid approaches and perpetual reanalysis unlock new answers

Let's take a critical look at how these integrated approaches are changing the field.

Here is a position worth defending: the biggest bottleneck in ultra-rare disease research is not technology. It is workflow architecture. The tools exist. WGS costs have dropped by orders of magnitude. iPSC protocols are increasingly standardized. AI prioritization platforms are commercially available. What holds most programs back is the failure to integrate these tools into a genuinely adaptive, continuously updated workflow.

Perpetual reanalysis is not a nice-to-have. Variants classified as VUS in 2022 are being reclassified as pathogenic in 2026 at meaningful rates as functional databases accumulate evidence. Programs that reanalyze annually capture these reclassifications and deliver diagnoses to families who had been told there were no answers. Programs that treat genomic analysis as a one-time event leave those families waiting indefinitely.

The hybrid approach also resolves a false debate that has persisted too long in rare disease research: in silico versus wet lab. Computational modeling is fast and scalable but cannot replace the irreducible complexity of a living cell. Organoids are biologically precise but time-consuming and expensive to scale across a large variant list. The answer is not to choose. It is to sequence these platforms so that computational analysis filters the candidate space aggressively before any cellular resource is committed.
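This sequencing of platforms amounts to a capacity-limited funnel: score everything computationally, then spend scarce organoid capacity on the top candidates. This minimal sketch assumes a single numeric in silico score per variant and a fixed number of organoid assay slots; both are hypothetical parameters. Queued variants are not discarded. They wait for capacity or for new evidence at the next reanalysis.

```python
def triage(scores: dict[str, float], organoid_slots: int,
           min_score: float) -> tuple[list[str], list[str], list[str]]:
    """Split candidates into (send_to_organoids, queued, filtered_out).

    `scores` is assumed to come from an upstream in silico model where a higher
    score means a variant is more likely to be damaging.
    """
    passing = sorted(
        (v for v, s in scores.items() if s >= min_score),
        key=lambda v: scores[v],
        reverse=True,
    )
    filtered_out = sorted(v for v, s in scores.items() if s < min_score)
    return passing[:organoid_slots], passing[organoid_slots:], filtered_out
```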

Non-coding variants and VUS reclassification represent the most underappreciated edge cases in the field right now. A significant fraction of undiagnosed patients carry pathogenic variants in regulatory regions, deep intronic sequences, or untranslated regions that standard pipelines never examine. RNA-seq layered onto WGS data is the most practical current solution. AI models trained on non-coding variant effects are also advancing rapidly and are likely to become a viable computational complement.
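To show what "RNA-seq layered onto WGS" looks for, here is a minimal splicing-outlier check. For each splicing event it computes percent spliced in (PSI) from junction read counts and compares the patient with control samples using a z-score. It assumes the inclusion and exclusion counts per event come from an upstream junction-counting step. The thresholds are illustrative. Dedicated count-based outlier methods such as FRASER model the data far more carefully than this simple z-score.

```python
from statistics import mean, stdev


def psi(inclusion: int, exclusion: int) -> float:
    """Percent spliced in, as a fraction: inclusion / (inclusion + exclusion)."""
    total = inclusion + exclusion
    if total == 0:
        raise ValueError("no informative reads for this event")
    return inclusion / total


def splicing_outliers(patient: dict[str, tuple[int, int]],
                      controls: dict[str, list[tuple[int, int]]],
                      z_cutoff: float = 3.0, min_reads: int = 20,
                      min_sd: float = 0.01) -> dict[str, float]:
    """Flag splicing events whose patient PSI deviates strongly from controls.

    Counts are (inclusion_reads, exclusion_reads) per event, assumed to come from
    an upstream junction-counting step. Thresholds are illustrative.
    """
    flagged = {}
    for event, (inc, exc) in patient.items():
        if inc + exc < min_reads or event not in controls:
            continue
        control_psi = [psi(i, e) for i, e in controls[event] if i + e >= min_reads]
        if len(control_psi) < 3:
            continue  # too few well-covered controls to estimate spread
        # Floor the SD so near-constant controls do not produce infinite z-scores.
        sd = max(stdev(control_psi), min_sd)
        z = (psi(inc, exc) - mean(control_psi)) / sd
        if abs(z) >= z_cutoff:
            flagged[event] = round(z, 2)
    return flagged
```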

Digital twins and AI-driven adaptive research designs also reduce a bias that has historically distorted rare disease research: the bias toward variants in well-studied genes. Phenotype-driven AI tools rank candidates by biological plausibility rather than publication frequency. As a result, genuinely novel disease genes are surfaced rather than buried beneath incidental findings in known disease genes. Drug repurposing screens, which show how FDA-approved drugs are transforming rare disease care, benefit directly from this shift toward unbiased, data-driven candidate selection.
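The simplest phenotype-driven ranking makes the contrast concrete. It compares the patient's HPO terms with the terms annotated to each candidate gene and takes no input from how often a gene appears in the literature. The sketch below uses plain Jaccard overlap of term sets, which is a deliberate simplification. Production tools typically use ontology-aware semantic similarity that credits related (not only identical) terms and weights rare features more heavily. In practice, gene annotations would come from a curated resource such as the HPO annotation files; any gene names used with this sketch here are hypothetical.

```python
def jaccard(a: set[str], b: set[str]) -> float:
    """Shared terms divided by all distinct terms across both sets."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0


def rank_genes(patient_terms: set[str],
               gene_annotations: dict[str, set[str]]) -> list[tuple[str, float]]:
    """Rank genes by phenotype overlap only; ties are broken alphabetically."""
    scores = {gene: jaccard(patient_terms, terms)
              for gene, terms in gene_annotations.items()}
    return sorted(scores.items(), key=lambda item: (-item[1], item[0]))
```

Take a patient profile of {HP:0001250, HP:0001263, HP:0000252} and a hypothetical gene annotated with only the first two terms. The gene scores 2/3, however rarely it has been studied.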

The uncomfortable practical truth is that most rare disease programs are underinvesting in reanalysis and overinvesting in initial sequencing throughput. Getting sequencing right is necessary. Keeping analysis current is what actually delivers answers.

Connect with expert resources for rare disease research

If you are navigating an ultra-rare or undiagnosed genetic disease case and need to connect these research processes with actionable next steps, the gap between scientific insight and patient-specific action is exactly where the right platform makes a measurable difference.

RareLabs builds patient-derived iPSC models, runs parallel treatment screens across thousands of FDA-approved compounds and custom ASOs, and evaluates gene therapy options for cases that lack approved treatments. The RareLabs knowledge base provides curated scientific resources aligned with the research processes described here, from phenotyping standards to functional validation frameworks. When your team is ready to move from variant identification to therapeutic discovery, the RareLabs platform offers a structured, transparent, and scientifically rigorous path forward designed specifically for the complexity of ultra-rare disease research.

Frequently asked questions

What is the first step in researching an ultra-rare genetic disease?

Start by gathering comprehensive phenotypic data from the patient and family using structured HPO terminology, then proceed to advanced genomic sequencing. The research process begins with referral and phenotyping because sequencing interpretation depends heavily on the quality of the clinical data provided.

How often should variant reanalysis be performed?

Variant reanalysis should be conducted at least annually, and ideally triggered by major database updates. Perpetual reanalysis is now considered a core practice rather than an optional follow-up, given the rate at which genetic databases accumulate new functional evidence.

What is the diagnostic yield for undiagnosed disease research programs?

Diagnostic yields reach 40 to 50% in pediatric cases after prior negative testing, with reanalysis boosting yields by up to 17% in programs like UDN and TUDP. These figures underscore how much is still recoverable even after an initial negative result.

Are animal models always necessary for functional validation?

Animal models provide irreplaceable data for preclinical safety and pharmacokinetics. For mechanistic studies, however, patient-derived organoids often capture human-specific disease biology more faithfully, and in silico tools help decide which variants are worth testing. A hybrid strategy combining these modalities is almost always superior to any one alone.

What is an n-of-1 clinical trial in rare disease research?

An n-of-1 trial tests a therapy in a single patient, using natural history data as the comparative evidence base. This FDA framework for rare diseases is particularly important for ultra-rare conditions where assembling a conventional control cohort is logistically or ethically impossible.